AI + Hardware, the Next Decade Will Reshape the Landscape

In March 2026, thirty researchers from UIUC, Stanford, UCLA, NVIDIA, Google, IBM, and other institutions published a single vision paper under the leadership of Deming Chen at UIUC. The title: “AI+HW 2035: Shaping the Next Decade.” It covers how AI and hardware need to co-evolve over the next ten years. This is not a paper reporting experimental results. It reads more like a consensus declaration from industry and academia on where things need to go.

Here is why it matters, what it actually says, and what investment signals you can pull from it.

1. The Energy Crisis in AI: Why This Paper Exists Now

Every time a new generation of AI models arrives, the compute requirements jump by an order of magnitude. From GPT-3 to GPT-4, and from GPT-4 to whatever comes next, the energy, memory, and network bandwidth needed for training grow exponentially.

The problem is that hardware progress cannot keep up. Moore’s Law has been slowing for years, and simply stacking more GPUs hits a wall in cost and power. The paper makes this diagnosis clearly. The future of AI does not hinge on making models smarter. It hinges on achieving the same intelligence with less energy.

This is where the central concept of “intelligence per joule” comes in.

A joule is a unit of energy. Running a 100-watt light bulb for one second consumes 100 joules. The argument is that raw FLOPS or model size will matter less and less. What becomes the real competitive metric is how much meaningful performance you can extract from a single unit of energy.
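To make the metric concrete, here is a minimal sketch. Energy is power multiplied by time, and "intelligence per joule" is approximated here as generated tokens per joule. That proxy, the function names, and the example figures (a 700 W and a 350 W system each producing 20,000 tokens in 10 seconds) are illustrative assumptions, not numbers from the paper.

```python
def energy_joules(power_watts: float, seconds: float) -> float:
    """Energy (J) = power (W) x time (s)."""
    return power_watts * seconds


def tokens_per_joule(tokens: float, power_watts: float, seconds: float) -> float:
    """Hypothetical proxy for 'intelligence per joule': useful output per unit of energy."""
    return tokens / energy_joules(power_watts, seconds)


# The light bulb from the text: 100 W for 1 s.
bulb = energy_joules(100, 1)

# Two made-up systems producing the same output in the same 10 s window.
baseline = tokens_per_joule(tokens=20_000, power_watts=700, seconds=10)
efficient = tokens_per_joule(tokens=20_000, power_watts=350, seconds=10)

print(f"bulb energy: {bulb} J")
print(f"baseline:  {baseline:.3f} tokens/J")
print(f"efficient: {efficient:.3f} tokens/J")
```

Same output, half the power, twice the tokens per joule. Under this metric the second system wins even though it is no faster.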

This is not an academic exercise. In an era where data center power costs determine AI business profitability, efficiency is competitive advantage. The winner is not the company that buys the most GPUs. It is the company that squeezes the most inference out of the same wattage.

2. The Real Bottleneck Is Not Compute. It Is Data Movement.

When people talk about AI performance, the instinct is to focus on compute: how many TFLOPS the GPU delivers, how many cores it has. The paper points the finger somewhere else. Bottlenecks like thermal management, power delivery, and interconnects all exist and are covered in Section 5 below, but the paper identifies data movement as the most fundamental constraint.

Moving data costs more energy than actually computing with it.

In current computing architectures, data lives in memory and has to be physically transferred to the processor before any computation can happen. That transfer is where enormous amounts of energy and time get wasted. The industry calls this the “memory wall.”
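A rough sketch shows how lopsided the energy budget can get. The two per-operation costs below are placeholder values chosen only to reflect the general pattern that an off-chip memory access costs a few hundred times more than an arithmetic operation. They are assumptions for illustration, not measurements from the paper, and real ratios depend on the process node and memory technology.

```python
# Illustrative per-operation energy costs in picojoules (assumed values).
PJ_PER_FLOP = 1.0          # one on-chip arithmetic operation
PJ_PER_DRAM_WORD = 500.0   # fetching one word from off-chip memory


def energy_breakdown(flops: int, dram_words: int) -> dict:
    """Split total energy into compute and data-movement components."""
    compute = flops * PJ_PER_FLOP
    movement = dram_words * PJ_PER_DRAM_WORD
    total = compute + movement
    return {
        "compute_pj": compute,
        "movement_pj": movement,
        "movement_share": movement / total,
    }


# Matrix-vector product y = W x with an n x n weight matrix streamed from memory once:
# 2*n*n flops (one multiply and one add per weight),
# n*n weight words plus n input words plus n output words moved.
n = 4096
result = energy_breakdown(flops=2 * n * n, dram_words=n * n + 2 * n)
print(f"share of energy spent moving data: {result['movement_share']:.1%}")
```

With each weight used only once, almost all of the energy in this toy model goes to fetching data rather than computing with it. That is the memory wall expressed as arithmetic.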

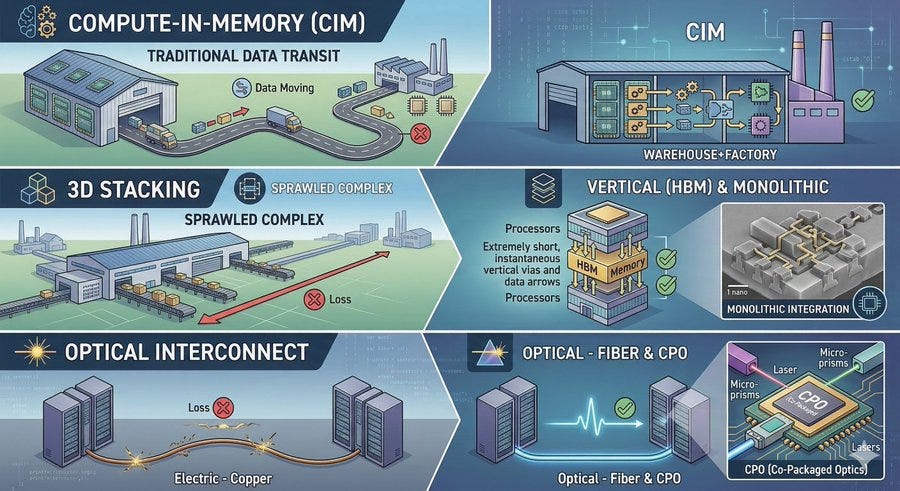

The paper lays out three directions for solving this bottleneck. Let me use an analogy to make each one concrete.

Compute-in-Memory (CIM). Today, goods (data) are trucked from a warehouse (memory) to a factory (processor), where assembly (computation) happens. CIM flips the model. Do the assembly inside the warehouse so you never need the truck. CIM is the technology that performs computation directly where data is stored.

3D stacking. Instead of expanding the factory outward, stack it upward. Memory and processors are stacked vertically, collapsing the physical distance between them. HBM is the most prominent example of this approach today. The paper extends the roadmap further, to 3D monolithic integration, which means vertical integration starting at the transistor level.

Optical interconnects. Connect chip to chip and server to server using light instead of electrical signals. Electrical signals lose energy rapidly over distance. Optical signals lose far less. This is why Co-Packaged Optics (CPO) and silicon photonics are getting so much attention.

Put it all together and the picture becomes clear. HBM, CPO, and CIM are not separate trends. They are three different approaches to the same underlying problem: the energy cost of moving data. One paper, one framework, and the whole landscape snaps into focus.

3. AI and Hardware Have to Evolve Together

The central argument of this paper comes down to one point. You cannot develop AI software and hardware separately. They need to be designed together. This is what the field calls co-design.

The current reality looks like this. AI algorithm researchers assume the GPU will just get faster and design their models accordingly. Chip designers optimize their hardware for whatever model architecture is popular right now. The problem is that a chip takes two to three years to design, while AI model paradigms shift every six months. By the time the chip is ready, the world has already moved on.

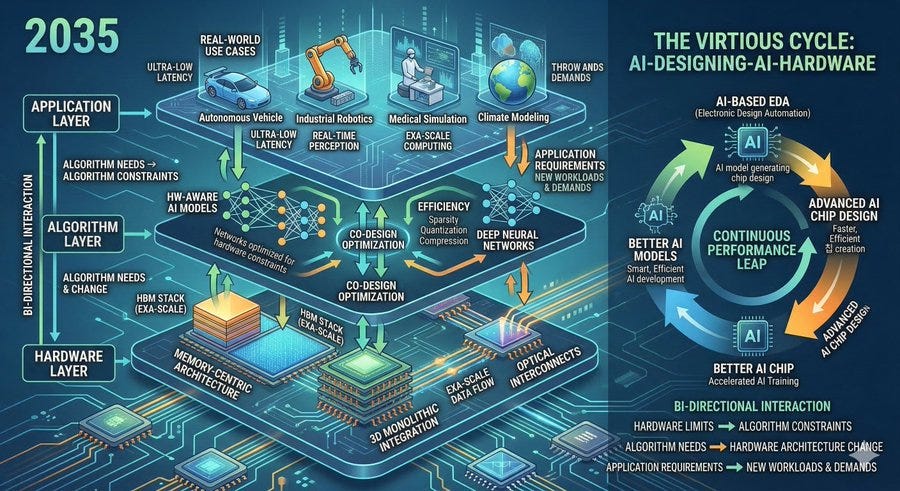

The paper proposes a three-layer framework to address this.

At the bottom is the hardware layer: memory-centric architectures, 3D stacking, optical interconnects. The physical breakthroughs. In the middle is the algorithm layer: AI models that are aware of hardware constraints and operate efficiently within them. At the top is the application layer: real use cases like robotics, autonomous driving, and scientific simulation that push new requirements back down to the algorithm and hardware layers below.

The key insight is that these three layers are not one-directional. They feed back into each other. Hardware constrains what algorithms can do. Algorithms shape how hardware gets designed. Applications create new demands on both.

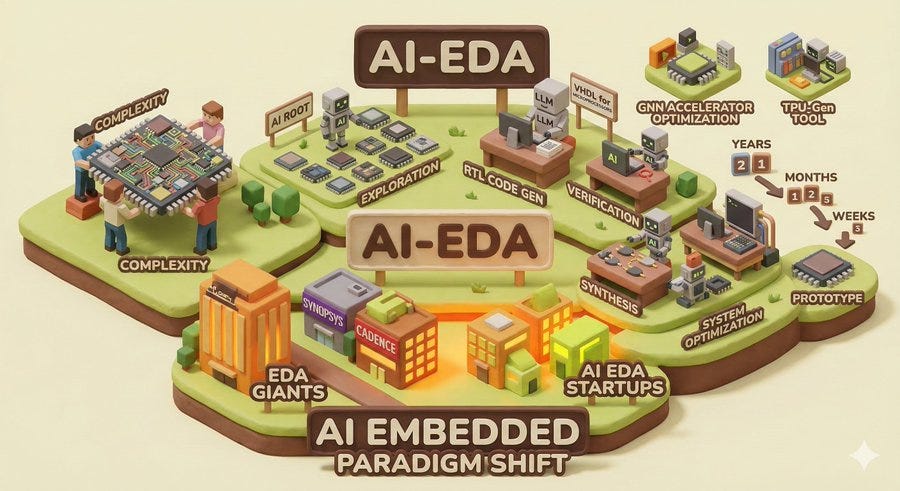

One of the more interesting pieces of the paper is its vision for AI designing AI hardware. The paper treats AI-driven EDA (Electronic Design Automation) as essential infrastructure for future hardware development. Chip design has grown too complex for humans to manage purely through manual effort. AI designs the chip. That chip enables better AI training. The loop reinforces itself. This is the 2035 that the paper describes.

4. What Does Success Look Like in 10 Years?

The paper defines success across four dimensions.

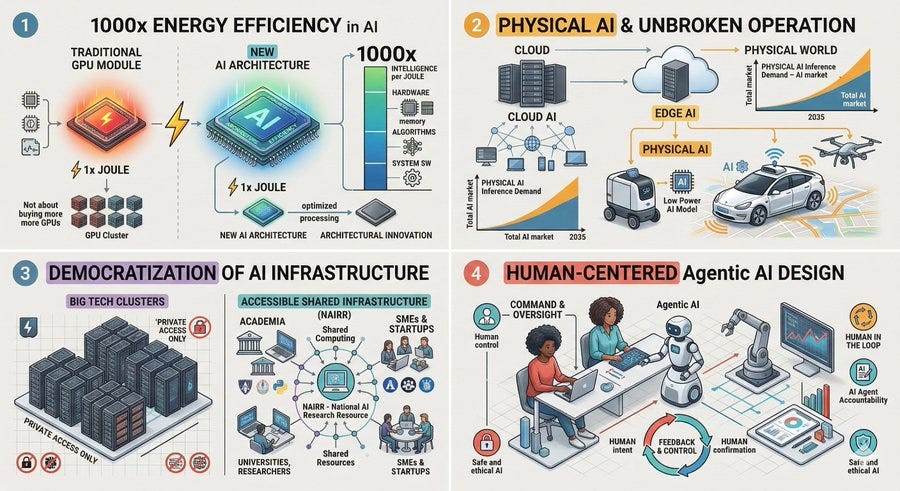

1000x improvement in AI training and inference efficiency. This means doing 1000x more AI computation on the same power budget we have today. That is categorically different from buying 1000x more GPUs. It requires simultaneous optimization across every layer: hardware architecture, algorithms, and system software. Note that this 1000x target is measured in energy efficiency (intelligence per joule), not raw performance. It is a separate metric from the reliability degradation discussed in Section 5-5.

Seamless AI from cloud to edge to the physical world. The paper puts significant emphasis on “Physical AI.” Not AI running inside data centers, but AI operating in real time in the physical world: robots, autonomous vehicles, drones. The paper’s projection is that by 2035, Physical AI will account for the majority of total AI inference demand. That means efficient AI models running on low-power, compact processors become a necessity, not a luxury.

Democratization of AI infrastructure access. Right now, cutting-edge AI research is concentrated in big tech companies with massive GPU clusters. The paper argues that universities and smaller organizations need access to world-class AI infrastructure as well. It points to initiatives like the US government’s NAIRR (National AI Research Resource) as concrete mechanisms for expanding shared access.

Human-centric design principles. As agentic AI, systems that make autonomous decisions and take actions, becomes widespread, how humans and AI collaborate and communicate becomes a core design challenge. The technology can advance, but the architecture must keep humans in control.
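The 1000x efficiency target from the first dimension implies a steep compounding rate. As a back-of-the-envelope check, the sketch below computes the yearly gain needed to reach 1000x in ten years, and how long the same target would take at a slower pace. The "doubling every two years" comparison is a hypothetical reference point, not a figure from the paper.

```python
import math


def required_annual_gain(total_gain: float, years: int) -> float:
    """Constant yearly multiplier that compounds to total_gain over the given years."""
    return total_gain ** (1 / years)


def years_to_reach(total_gain: float, annual_gain: float) -> float:
    """Years needed to reach total_gain when improving by annual_gain each year."""
    return math.log(total_gain) / math.log(annual_gain)


rate = required_annual_gain(1000, 10)
slow_path = years_to_reach(1000, math.sqrt(2))  # doubling every two years

print(f"needed: {rate:.3f}x per year")
print(f"at 2x every two years: {slow_path:.1f} years")
```

Reaching 1000x in a decade means efficiency has to roughly double every single year. At a doubling every two years, the same target takes about twice as long, which is why the paper insists on optimizing hardware, algorithms, and software at the same time.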

5. Signals for Investors and Engineers

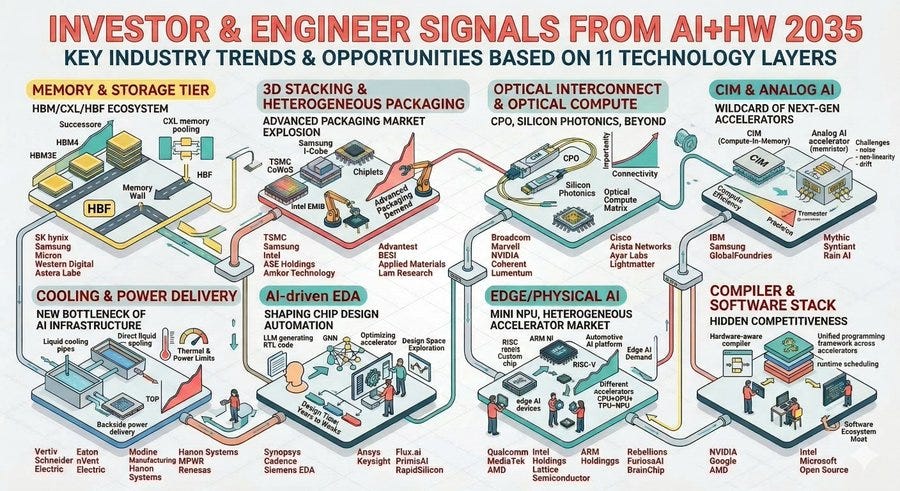

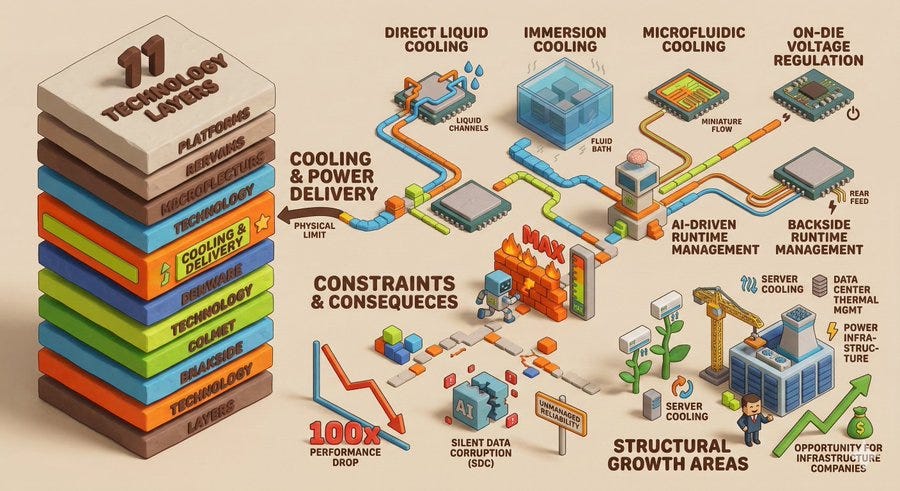

The signals embedded in this vision paper are concrete. The paper defines 11 distinct technology layers and summarizes the key technologies, trends, and challenges for each in Table 1. What follows draws from that table, with a clear distinction between what the paper directly states and the industrial implications I am layering on top.

One caveat worth stating upfront. This paper does not recommend specific companies or products. Any company names mentioned below are my interpretation of where the paper’s stated technology directions map onto today’s industry landscape. Similarly, terms like CXL, CPO, and HBF are my translations of the paper’s higher-level concepts (unified memory, photonic interconnect, memory-flash hybrid) into the closest current industry equivalents. Keep that distinction in mind as you read.

5-1. Memory and Storage Hierarchies: HBM, CXL, and HBF

The paper classifies “Memory and Storage Hierarchies” as a distinct technology layer and names as its core technologies: HBM and its successors; unified memory models between CPUs and accelerators; emerging memory and flash hybrids; memory compression, caching, and tiering strategies; and persistent memory (NVRAM).

The consensus that the memory wall is the fundamental bottleneck implies that HBM demand will grow structurally for the long term. The SK Hynix versus Samsung HBM competition is not a short-term trend. It is the opening chapter of a decade-long megatrend. The paper’s explicit reference to HBM and its successors means the roadmap extends well beyond HBM3E, through HBM4 and further.

The paper also calls out memory-flash hybrids as a separate item. Its language is “emerging memory and flash hybrids,” without naming specific products. HBF (High Bandwidth Flash), which SanDisk introduced and is working with SK Hynix to standardize, is the closest current industry implementation of this direction. HBM is expensive and capacity-constrained. As large AI model parameter counts keep growing, there is a natural demand for a middle-tier memory layer: not quite as fast as HBM, but far larger and cheaper. The gap is real and so is the commercial opportunity.

CXL (Compute Express Link) for memory pooling fits into the same picture. The paper identifies “unified memory models” and “disaggregated resources” as key trends, and CXL is the current industry standard for realizing them. The direction is away from each processor owning its own private memory and toward memory as a shared, pooled resource.
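A toy capacity model illustrates why pooling is attractive. The four demand figures and the 64 GB per-host capacity are made-up numbers, and the sketch deliberately ignores the extra latency of reaching pooled memory over a fabric. It only shows how private memory can be stranded on one host while another runs out.

```python
def private_memory(demands_gb, capacity_per_host_gb):
    """Each host can use only its own memory."""
    unmet = sum(max(0, d - capacity_per_host_gb) for d in demands_gb)
    stranded = sum(max(0, capacity_per_host_gb - d) for d in demands_gb)
    return {"unmet_gb": unmet, "stranded_gb": stranded}


def pooled_memory(demands_gb, capacity_per_host_gb):
    """All hosts draw from one shared pool of the same total size."""
    total_capacity = capacity_per_host_gb * len(demands_gb)
    total_demand = sum(demands_gb)
    return {
        "unmet_gb": max(0, total_demand - total_capacity),
        "free_gb": max(0, total_capacity - total_demand),
    }


demands = [30, 90, 20, 110]  # hypothetical per-host working sets in GB
private = private_memory(demands, capacity_per_host_gb=64)
pooled = pooled_memory(demands, capacity_per_host_gb=64)

print("private:", private)
print("pooled: ", pooled)
```

With the same total capacity, the private layout leaves demand unmet on two hosts while memory sits idle on the other two. The pooled layout fits everything.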

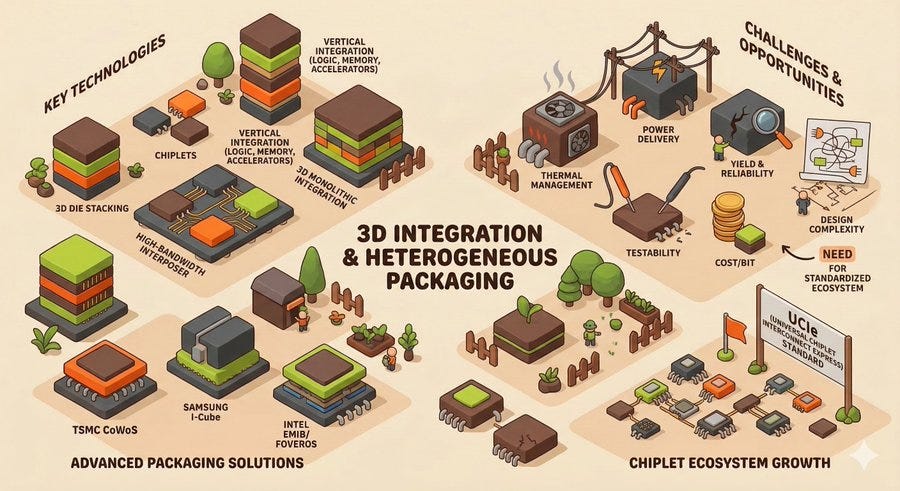

5-2. 3D Integration and Heterogeneous Packaging: Advanced Packaging Explodes

The paper treats “3D Integration and Heterogeneous Packaging” as a separate layer, with 3D monolithic integration, 3D die stacking, chiplets, high-bandwidth interposers, and vertical integration of logic plus memory plus accelerators as its core technologies.

The challenges the paper is candid about are themselves investment signals: thermal management, power delivery, yield and reliability, design complexity, testability, cost per bit, and the absence of standardized low-latency interconnect and packaging ecosystems. Each of these is a problem that still needs solving, and every solved problem is a market for whoever solves it.

TSMC’s CoWoS, Samsung’s I-Cube, and Intel’s EMIB and Foveros are the core infrastructure for this layer. As the chiplet ecosystem matures, packaging technology becomes increasingly central. The paper’s emphasis on the need for a standardized low-latency interconnect and packaging ecosystem is a signal that standards like UCIe (Universal Chiplet Interconnect Express) still have room to grow.

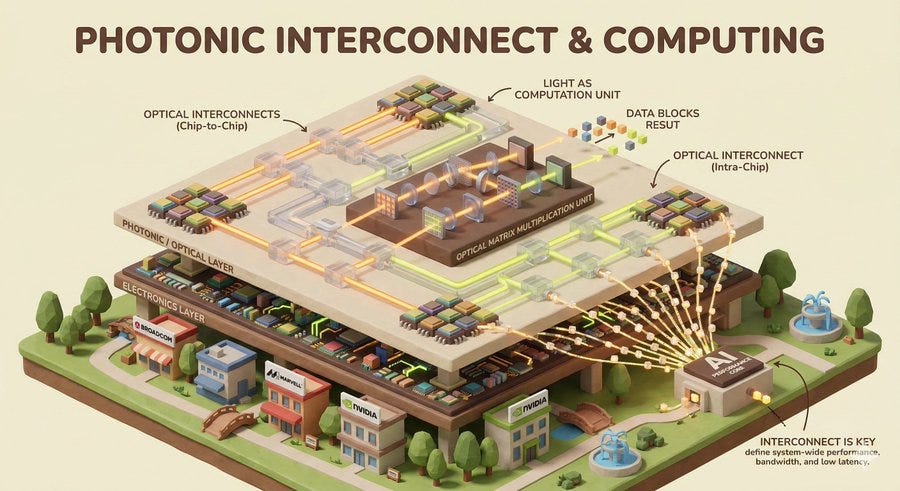

5-3. Optical Interconnects and Photonic Computing: CPO, Silicon Photonics, and Beyond

The paper gives “Photonic and Optical Interconnect and Compute” its own dedicated layer. Core technologies include inter-chip and intra-chip optical interconnects, optical accelerators for matrix multiplication, electro-optic co-design, and in-network optical computing.

What is interesting is that the paper does not limit optical technology to data transport. It envisions photonic components becoming new primitive units for linear algebra and signal processing. Light performing matrix operations directly, not just carrying data. The paper is realistic about the difficulty: integrating optical and electronic circuits remains a central unsolved problem.

The paper itself uses “photonic interconnect” and “electro-optic co-design” as its framing rather than industry terms like CPO or silicon photonics. But Broadcom, Marvell, and NVIDIA, all currently leading in CPO and silicon photonics investment, have researchers in the authorship of this paper. That itself is evidence that the industry views optical interconnects as a long-term necessity, not a speculative bet.

The paper also states plainly that interconnects matter as much as computation. Interconnects used to be treated as a processor’s accessory. But in a world where AI models are distributed across thousands of chips, bandwidth, latency, and topology become primary determinants of whole-system performance.

5-4. CIM and Analog AI: The Wild Card in Next-Generation Accelerators

The paper classifies “Analog, Mixed-Signal, and In-Memory Compute” as a distinct layer and assesses it as having the potential to significantly raise compute efficiency while cutting the energy cost of data movement. Core technologies listed are CIM, analog AI accelerators (memristors, resistive crossbars), and digital-analog hybrid circuits.

But the paper is honest about the barriers. Noise, nonlinearity, precision, and calibration remain unsolved. Large-scale crossbar structures face persistent problems with nonlinearity, noise, and drift. Commercial deployment at scale is still years away.

So why does it matter? The paper’s argument centers on the co-design opportunity. The fundamental limitation of CIM, which is imprecise computation, can be treated as a feature rather than a bug if paired with the right algorithm. Probabilistic models and algorithms with tolerance for approximate computation can trade a small amount of accuracy for dramatic gains in energy efficiency. That kind of system-level optimization is only possible through co-design. Neither hardware nor software alone gets you there.
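The idea that imprecise hardware can still give correct answers is easy to demonstrate in miniature. In the sketch below, every multiplication in a small dot-product classifier is perturbed by a random relative error of up to 5%, standing in for analog non-idealities. The weights, the input, the uniform noise model, and the 5% bound are all assumptions for illustration. Real crossbar noise and drift behave differently, and none of this comes from the paper.

```python
import random


def exact_dot(w, x):
    return sum(wi * xi for wi, xi in zip(w, x))


def noisy_dot(w, x, eps, rng):
    """Dot product where each multiply is off by a relative error in [-eps, +eps]."""
    return sum(wi * xi * (1 + rng.uniform(-eps, eps)) for wi, xi in zip(w, x))


def argmax(values):
    return max(range(len(values)), key=values.__getitem__)


# Made-up weights for a 3-class linear classifier and one input vector.
W = [
    [0.9, 0.1, -0.3, 0.4],
    [0.2, 0.8, 0.5, -0.1],
    [-0.4, 0.3, 0.2, 0.1],
]
x = [1.0, 0.5, -0.25, 0.75]


def agreement(eps, trials=1000, seed=0):
    """Fraction of noisy runs that pick the same class as exact arithmetic."""
    rng = random.Random(seed)
    exact_class = argmax([exact_dot(w, x) for w in W])
    hits = 0
    for _ in range(trials):
        noisy_class = argmax([noisy_dot(w, x, eps, rng) for w in W])
        hits += noisy_class == exact_class
    return hits / trials


print(f"agreement with 5% multiply error: {agreement(0.05):.0%}")
```

The individual scores are wrong on every run, but the decision never changes because the gap between the top two classes is larger than the worst-case accumulated error. An algorithm designed with that kind of margin in mind is what lets imprecise hardware pay off.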

From an investment perspective, this is a 6-to-10-year horizon, not 2-to-5. But if it scales successfully, the energy efficiency advantage over conventional digital accelerators could be transformative.

5-5. Cooling and Power Delivery: The New Bottleneck in AI Infrastructure

The fact that the paper dedicates one of its 11 technology layers specifically to “Cooling and Power Delivery” is itself a statement. Thermal and power constraints now set the physical ceiling on AI system performance.

Core technologies cited include direct liquid cooling, immersion cooling, microfluidic cooling, on-die voltage regulation, backside power delivery, and AI-driven runtime thermal and power management.

The paper’s diagnosis is specific. Power and thermal limits now directly constrain AI system performance, scalability, and reliability. On the system side, it warns that without proper reliability management, problems like silent data corruption can degrade performance by up to 100x. This is a separate figure from the 1000x efficiency improvement target discussed earlier. That 1000x is a goal. This 100x is a warning about what happens when reliability management fails.

The implication is that the opportunity in AI infrastructure extends well beyond the semiconductor chip itself. As AI GPU TDP keeps climbing, server cooling, data center thermal management, and power infrastructure are structurally growing markets.

5-6. AI-Driven EDA: Chip Design Automation Gets Disrupted

The paper treats “AI-driven electronic design automation” as a prerequisite for hardware innovation. Chip design has already grown too complex to manage through purely human effort.

The vision includes LLMs for design space exploration, RTL code generation, verification, synthesis, and system-level optimization. The paper cites concrete examples: research using LLMs to generate VHDL code for high-performance microprocessors, GNN-based automated accelerator optimization, and LLM-driven TPU auto-design tools (TPU-Gen).

The projection is that combining these capabilities could compress the time from concept to prototype from years down to months, and eventually weeks.

Synopsys and Cadence are already moving in this direction. At the same time, the market is opening for LLM-based chip design automation startups. When the paper calls for embedding AI at the core of design methodology, it is not projecting incremental improvement to existing EDA workflows. It is calling for a fundamental paradigm shift.

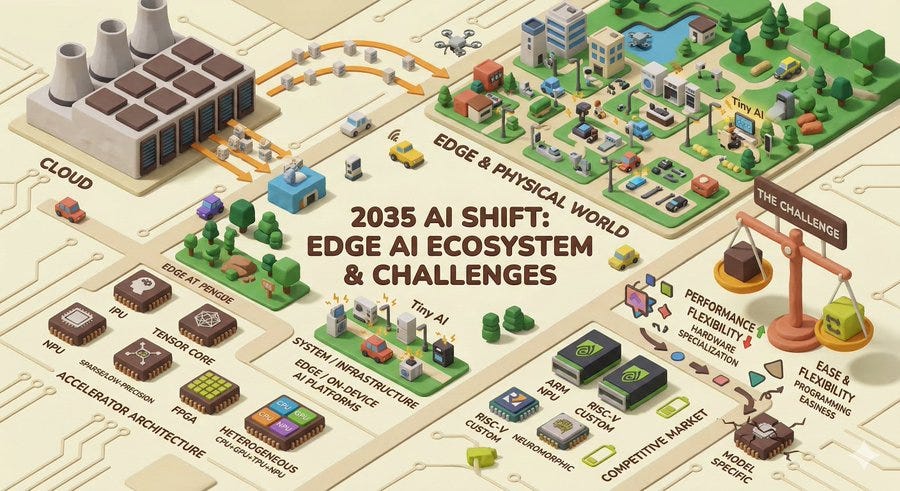

5-7. Edge and Physical AI: Small NPUs and Heterogeneous Accelerators Scale Up

If the majority of inference demand by 2035 comes from the edge and the physical world, the center of gravity in the semiconductor market shifts.

The paper’s “Accelerator Architecture” layer lists domain-specific accelerators (tensor cores, NPUs, IPUs), low-precision and sparse architectures, reconfigurable architectures (FPGAs, CGRAs), and heterogeneous architectures (CPU plus GPU plus TPU plus NPU combinations) as core technologies. Its “System and Infrastructure” layer explicitly names “edge and on-device AI platforms (tiny AI).”

Small, low-power, high-efficiency AI processors become a market on par with large data center GPUs. ARM-based NPUs, RISC-V-based custom processors, and neuromorphic chips will all compete for this space.

The challenge the paper calls out matters here too. Programmability and flexibility remain core obstacles. Specializing hardware raises performance but reduces flexibility. In an environment where AI models evolve rapidly, an accelerator optimized for one specific model risks obsolescence quickly. Where to draw the line between performance and flexibility is the central strategic question for edge AI chip companies.

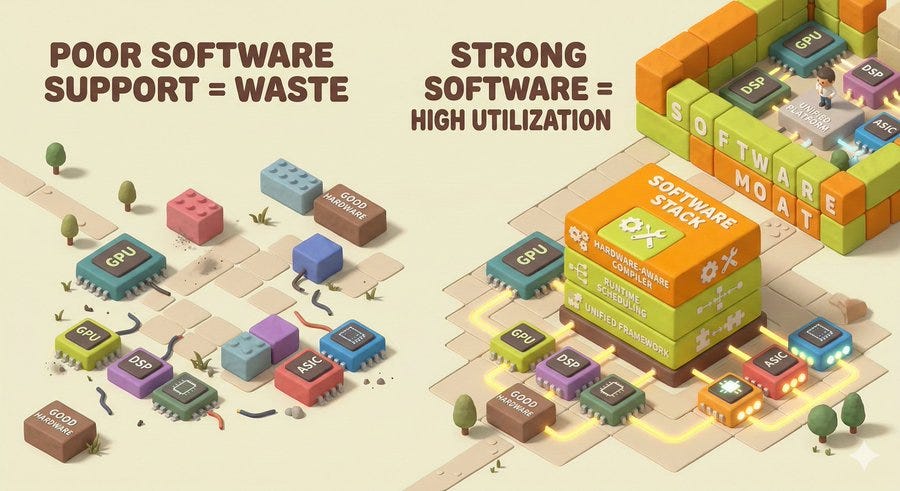

5-8. Compilers and Software Stacks: The Hidden Competitive Moat

One area that is easy to overlook but which the paper explicitly warns about: in heterogeneous, memory-centric systems, inadequate software support leads to severe underutilization of hardware.

No matter how good the hardware is, a software stack that cannot keep up will drag performance down. Hardware-aware compilers, unified programming frameworks across heterogeneous accelerators, and runtime scheduling optimization are what the paper identifies as the hidden determinants of system performance.

This is also the essence of what NVIDIA has built with CUDA. NVIDIA’s GPU hardware is powerful, but the CUDA software ecosystem is the stronger moat. In the coming age of heterogeneous architectures, the companies with software platforms that can unify diverse processors under a single programming model will have a lasting structural advantage.

Closing Thoughts

The value of this paper is not that it argues for any particular technology. It is that institutions with very different interests, including NVIDIA, Google, IBM, Stanford, and UIUC, converged on a shared view of the next ten years. That convergence itself is the signal.

The message is clear. The era of “bigger and more” is giving way to the era of “more efficient and more tightly integrated.” Understanding this transition is what makes the next decade of the semiconductor industry legible.

Source: Deming Chen et al., “AI+HW 2035: Shaping the Next Decade,” arXiv:2603.05225, March 2026.
